Sturdy Site Architectures: Optimizing Your Internal Linking Strategy

The strongest sites are those that have a tightly knit architecture of interlinked pages. Each linked page works toward boosting its own ranking, which pulls up the domain’s authority as a whole. For B2B marketers and businesses, such a strong internal linking strategy is a must. B2B companies sell and relate to their customers in ways very different from B2C companies. With this in mind, it makes perfect sense that a strongly linked site is best for making new conversions and serving existing B2B customers.

You know that a strong internal linking strategy is important for B2B, but how do site pages become so sturdily constructed? The answer actually lies with you.

You may think you need to hire some Silicon Valley software engineer to remedy your site issues, but this is not the case. While professional help doesn’t hurt, you can actually relax and put your wallet away. The truth is, you can improve the connectivity of your site and improve the ranking of your pages along with it.

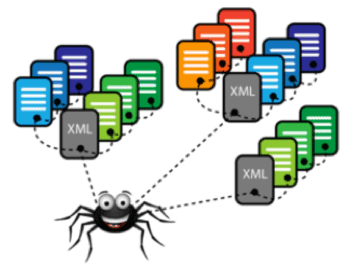

Improving the architecture of your site for better B2B relations does need some basic HTML know-how. Yet, with the right tools and metrics, your pages can attract traffic and conversions. Keeping these tips in mind will clean up your site for the search engines. Ideally, they will also improve your user experience, which in turn promotes repeat visitors and conversions. Think of it like a well-crafted spider’s web. You want your site to be strongly linked so that the spiders (search engines) can easily crawl through it. You also want to catch flies (visitors and conversions) in your sticky web. This post will show you how and why a strong B2B internal linking strategy attracts more spiders and catches more flies over time.

Impressing the Search Engine

Some B2B companies are looking to improve individual page authority. Others are looking to improve domain authority. Wherever you fall, you need to look at this obstacle through a search engine’s eyes.

Think of it this way:

Each B2B company is the desperate high school freshman pining for the prettiest girl in school. Google is always that girl. To get her attention, and the attention of potential B2B conversions, you must improve your site.

Many B2B content marketers don’t know that the user’s view of your page is actually different from that of the search engine. Even if you have a beautiful landing page with brilliant content and copy, you can still wind up with a low ranking. This happens when the site is not set up so that B2B customers and search engines can reach the content they want and need. If search engines can’t get to your content, they can’t record its value, and your ranking is going to suffer.

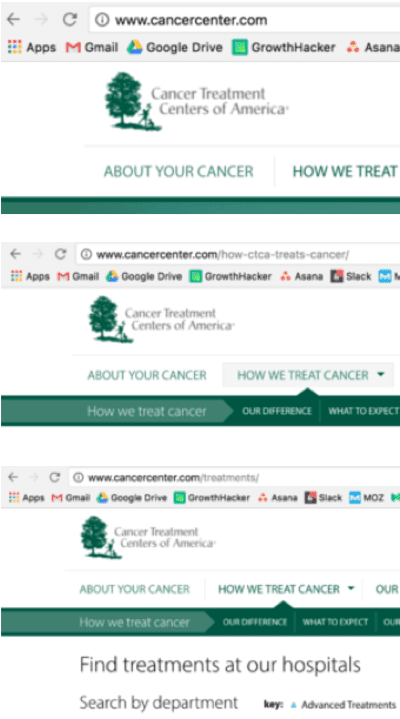

Here’s an example of the difference between what your page looks like to the viewer and what it looks like to the search engines:
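To get a rough feel for that difference yourself, you can strip a page down to the text and links a simple crawler could read. The sketch below uses Python’s built-in `html.parser` module. The `CrawlerView` class and the sample page are made up for illustration, and real search engine crawlers are far more sophisticated than this, so treat it as an approximation only.

```python
from html.parser import HTMLParser


class CrawlerView(HTMLParser):
    """Collects what a simple text-only crawler could read from a page."""

    def __init__(self):
        super().__init__()
        self.links = []      # (href, anchor text) pairs
        self.text = []       # readable text fragments
        self._href = None    # href of the <a> currently open, if any
        self._anchor = []    # text collected inside that <a>
        self._skip = 0       # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1
        elif tag == "a":
            self._href = dict(attrs).get("href")
            self._anchor = []

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1
        elif tag == "a" and self._href is not None:
            self.links.append((self._href, " ".join(self._anchor)))
            self._href = None

    def handle_data(self, data):
        if self._skip:
            return  # ignore script and style contents
        chunk = data.strip()
        if not chunk:
            return
        self.text.append(chunk)
        if self._href is not None:
            self._anchor.append(chunk)


# Hypothetical page: the image and the script add nothing readable.
SAMPLE_PAGE = """
<html><head><style>h1 {color: red}</style></head>
<body>
  <h1>Pricing</h1>
  <img src="hero.png">
  <a href="/services/">Our services</a>
  <script>renderFancyMenu();</script>
</body></html>
"""

view = CrawlerView()
view.feed(SAMPLE_PAGE)
print(view.text)    # ['Pricing', 'Our services']
print(view.links)   # [('/services/', 'Our services')]
```

Notice that the hero image and the scripted menu, which a visitor would see, contribute nothing to this stripped-down view. Only the heading and the one plain link survive.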

There are plenty of DIY software tools and sites to help create and identify effective page formats for search engines. For example, Moz’s Open Site Explorer provides metrics on the influence and linking profile of your domain and specific pages. It focuses on domain authority and page link metrics, with plenty of filters for you to fine-tune your analytics for B2B.

Screaming Frog’s SEO Spider is a crawling tool that will help you audit the actual architecture of your pages. The right architecture improves searchability, response codes, and site depth, among other things.

To create a strong B2B site architecture, begin by creating content with keyword intent. Your keyword strategy is as important as your internal linking strategy. Together, the two create a better connected, more relevant site overall. Keyword intent gives your content a focus that raises B2B visibility and conversions. How does one create strong B2B keyword intent?

A well-interlinked site will use sub-folders. Each sub-folder should target a keyword and link to pages that target related keywords. These related keywords are long-tail variations built from the original keyword strategy, and the original keywords are the ones the sub-folder targets in its anchor text.

To strengthen organic B2B linkage between pages, your keywords must match. To match, or at least connect keywords, you have to streamline your keyword research. You can do so by developing a strong B2B long-tail keyword pattern. Using your page’s anchor text as a baseline, you can create relevant B2B keywords to center your internal linking strategy.

Anchor Text and Descriptors

Anchor text needs to be driven, but also controlled and organic. Google may notice if you are writing your anchor text for the sole purpose of driving up your page’s authority. If the search engine does notice, you can end up with a penalty instead of any viable improvement to your site. Rather than creating an excessive amount of exact-match anchor text, try nuancing your pages to target keyword variations. Overusing exact-match anchors draws the negative attention of search engines, lowering visibility and conversions.
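One simple way to keep an eye on this is to measure how much of a page’s anchor text is an exact match for its target keyword. The sketch below is only an illustration: the anchor list, the keyword, and the `exact_match_share` helper are all hypothetical, and how high a share is too high is a judgment call you will have to make for your own site.

```python
from collections import Counter

# Hypothetical anchor texts pointing at one page (not from a real site).
anchors = [
    "internal linking",
    "internal linking",
    "internal linking",
    "how to plan internal links",
    "B2B site architecture guide",
    "learn more",
]
target_keyword = "internal linking"


def exact_match_share(anchor_texts, keyword):
    """Return the fraction of anchors that equal the keyword exactly."""
    if not anchor_texts:
        return 0.0
    counts = Counter(text.strip().lower() for text in anchor_texts)
    return counts[keyword.strip().lower()] / len(anchor_texts)


share = exact_match_share(anchors, target_keyword)
print(f"Exact-match share: {share:.0%}")   # Exact-match share: 50%
```

If the share climbs, swap some of the exact-match anchors for natural variations.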

Check out the URLs for the different subpages of this well-structured site (above).

You can boost the rankings of your pages by varying the anchor text. By targeting long-tail modifications of your original domain keyword, you can bring up domain authority as well. For example, a site focused on battling cancer would aim its original domain keywords at short-tail keywords related to “cancer treatment.” From here, the subfolders for the other interlinked pages should be based on long-tail keyword modifications. This means going from “cancer treatment” to “diagnoses,” “treatment prices,” and “chemotherapy clinics.” This makes navigating the site easy for both potential B2B viewers and other users. Impressing both will ensure higher rankings.
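To picture how those keywords turn into a subfolder structure, here is a minimal sketch that builds URL paths from the example above. The `slugify` and `subfolder_paths` helpers are hypothetical names, and the exact URL format is an assumption; your CMS may build its paths differently.

```python
import re


def slugify(phrase):
    """Lowercase a phrase and join its words with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", phrase.lower()).strip("-")


def subfolder_paths(head_keyword, long_tail_keywords):
    """Build one URL path per long-tail keyword under the head keyword."""
    root = "/" + slugify(head_keyword) + "/"
    return [root + slugify(keyword) + "/" for keyword in long_tail_keywords]


paths = subfolder_paths(
    "cancer treatment",
    ["diagnoses", "treatment prices", "chemotherapy clinics"],
)
for path in paths:
    print(path)
# /cancer-treatment/diagnoses/
# /cancer-treatment/treatment-prices/
# /cancer-treatment/chemotherapy-clinics/
```

Each path repeats the short-tail keyword and adds one long-tail modification, so the URL itself tells users and spiders where the page sits.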

Spiders and Crawl Space

Earlier, I likened a site with a strong B2B internal linking strategy to a web able to catch viewers and conversions. Much like a spider catching flies in its sticky web, your site can ensnare B2B users and searchers using the right tools. Let’s take this “web” idea a little further:

As mentioned, spiders are the web crawlers that search engines use to navigate and analyze your site’s infrastructure. These are the little buggers you need to impress. They are one of the reasons why link building and internal linking strategy are so important for B2B. Link building helps generate awareness and build the authority of your best content. It may also spread that “link authority” to other important, interlinked pages on your site.

Now, the spiders navigate your site. They do so by “crawling” and “indexing” all the different links, content, images, and anchor text that make up the guts of your pages. The crawl space is the linkages between your different pages, on your pages, and to and from your pages. Cleaning the crawl space up – or streamlining your internal linking strategy – is how you can up the ranking of already-developed content. The easier it is for the spider to crawl, the easier it is for you to rank.
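If you want to see how far a spider has to crawl to reach each page, you can model your site as a map of links and walk it breadth-first from the home page. This is a minimal sketch, assuming you already have a list of which pages link to which. The `site_links` map and the `crawl_depths` helper are hypothetical, and a real crawl would fetch live pages instead.

```python
from collections import deque

# Hypothetical internal link map: page -> pages it links to.
site_links = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/audits/"],
    "/blog/": ["/blog/post-1/"],
    "/blog/post-1/": ["/services/audits/"],
    "/services/audits/": [],
    "/old-landing-page/": ["/"],   # no other page links here
}


def crawl_depths(links, start="/"):
    """Breadth-first walk returning the click depth of each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = crawl_depths(site_links)
orphans = sorted(set(site_links) - set(depths))
print(depths)
print("Unreachable from the home page:", orphans)
```

Pages with a large depth, or pages that show up as unreachable, are the loose strands to fix first.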

Bottom-Up Link Building

Link building is certainly a must for any B2B content marketing campaign. Yet, most content marketers focus on domain authority (DA) too much. This can cause them to neglect the page authority (PA) of their individual pages. You don’t want your PA to suffer while trying to bolster your DA. If you were looking to strengthen your web, you wouldn’t tighten the central knot again and again and expect the rest of your web to solidify on its own, would you? Hopefully knot (pun intended).

Building your B2B link profile with targeted anchor text to your individual pages will raise the PA for each of your pages. Placing your B2B content pages and subpages deeper in your pagination with authoritative links and keyword targeted anchor text produces a more pervasive upward trend. It builds domain authority by amassing a legion of strong page authorities. The central knot may be what holds your web together, but strengthening each individual strand with specific and deliberate attention is what turns your silk into silver.

Common Problems with Interlinking

There are some common issues that sites run into when optimizing their internal linking strategy. Most of them come down to coding mistakes. Others come from a failure to trim the fat of your page architecture. A site filled with loose links leading nowhere and random clumps of knotted links isn’t going to stand the test of time. In fact, it won’t rank well against other B2B sites.

There are three major issues in any interlinking project that will cause you some grief:

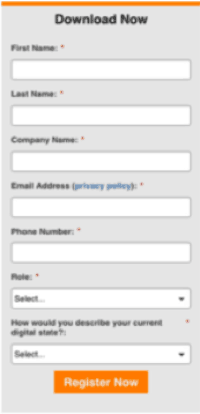

1. Gated Content

For the most part, your content should be readily available to users. Good site navigation means your B2B content is open and ready for exploration. Sometimes, you might offer content to users in exchange for information or registration. If users have to fill out a form or survey to access the content you are linking to, they’re likely to pass over it, and search engine spiders can’t fill out the form at all. One way to get around this issue and allow your content to be indexed is to use your gated content for inspiration. The gated piece can be reworked into blog posts and other openly accessible content within your site.

2. Link Flooding

Another big problem that sites often run into is overlooking unnecessary links. Unnecessary links can slow your page down, confuse users, or be missed completely. This can lead to serious problems, so you can’t let this issue sit. Instead, get down to work and try to remedy it straight away.

External link flooding is exactly what it sounds like. When a page is overloaded with too many external links, it can waste the space in your link profile. This is a huge issue because that space could be better spent linking to your own individual pages. For sites with a large B2B link profile, cut back on external links to free up space for your own pages. If Google is being selective about how many links it follows, make sure it at least sees yours.
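A quick way to spot link flooding is to count how many links on a page point to your own site and how many point elsewhere. The sketch below does this with Python’s standard `urllib.parse` module. The `split_links` helper, the page URL, and the link list are all hypothetical, and the sketch assumes that “internal” means the exact same host, so a subdomain would count as external.

```python
from urllib.parse import urljoin, urlparse


def split_links(page_url, hrefs):
    """Sort the hrefs found on page_url into internal and external lists."""
    site_host = urlparse(page_url).netloc
    internal, external = [], []
    for href in hrefs:
        absolute = urljoin(page_url, href)   # resolve relative links
        host = urlparse(absolute).netloc
        (internal if host == site_host else external).append(absolute)
    return internal, external


# Hypothetical page and links, for illustration only.
internal, external = split_links(
    "https://example.com/blog/post-1/",
    [
        "/services/",
        "../post-2/",
        "https://example.com/contact/",
        "https://partner.example.org/",
        "https://news.example.net/story",
    ],
)
print(len(internal), "internal,", len(external), "external")
# 3 internal, 2 external
```

If the external count dwarfs the internal one on an important page, that page is a good candidate for trimming.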

3. “Nofollow” Tags

You should also be checking your links for “nofollow” tags. A nofollow tag tells search engine spiders not to follow that link; human visitors can still click it. If a spider can’t crawl that link, it won’t find your content through it. If it can’t find that content, the content won’t be indexed, which means Google isn’t seeing the value of your piece.

This is a common mistake. Many B2B marketers find that software engineers accidentally write plugins that assign a “nofollow” tag. Even though it is common, you want to do your best to avoid this pitfall.

A prime example of this is “related posts” plugins in WordPress. Make sure to look at how these links are being sent to your blog content and that they are not marked “nofollow.” In short, if you’re spending all that time and energy developing B2B content, make sure it gets seen!
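Checking for this doesn’t take much. In HTML, a nofollow link carries `nofollow` as one of the values in the link’s `rel` attribute, so you can scan a page’s markup for it. The sketch below uses Python’s built-in `html.parser` module. The `NofollowFinder` class and the “related posts” snippet are hypothetical and only meant to show the idea.

```python
from html.parser import HTMLParser


class NofollowFinder(HTMLParser):
    """Records links whose rel attribute contains the nofollow value."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel holds space-separated values, e.g. rel="nofollow noopener"
        rel_values = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)


# Hypothetical "related posts" markup, for illustration only.
SNIPPET = """
<ul class="related-posts">
  <li><a href="/blog/post-1/">Post one</a></li>
  <li><a href="/blog/post-2/" rel="nofollow">Post two</a></li>
  <li><a href="/blog/post-3/" rel="noopener NoFollow">Post three</a></li>
</ul>
"""

finder = NofollowFinder()
finder.feed(SNIPPET)
print("nofollow:", finder.nofollowed)
# nofollow: ['/blog/post-2/', '/blog/post-3/']
```

Any internal link that lands in the nofollow list is worth a second look, especially if a plugin added it for you.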

Trapping Authority and Audience

The best B2B internal linking strategies are built around targeted keyword search results. These results must build off one another. They should connect back to your original domain keyword and spread out into long-tail modifications.

Remember that everything you link to reflects back on you as well. So be careful to surround your links only with strong, authoritative pages that rank highly for the keywords you are targeting.

A quality B2B site, or web, is made of many strong strands, all knit together with no loose ends or wasted space. This is to say that, if spiders need a web to crawl on, give them a silk stage to dance on instead. Your web(site) will be trapping everything that touches it, from ranking, to authority, to audience, and conversions.